The Art of Testing the Untestable

David Tzemach

Posted On: November 18, 2022

9141 Views

10 Min Read

It’s strange to hear someone declare, “This can’t be tested.” In reply, I contend that everything can be tested. However, one must be willing to accept the outcome of testing, which might include failure, financial loss, or personal injury. With that understanding, is anything truly untestable?

I first heard this comment in a somewhat different context, and now that I’ve spent more time in testing roles, I’m reconsidering what it may imply. If nothing else, my testing roles have taught me communication skills and patience. When someone approaches you with this comment, what else are they telling you about the product under test, or perhaps about themselves?

When you’re told something isn’t testable, take a deep breath and open a discussion with the individual. The discussion is not about why something is not testable, at least not explicitly. It is a dialogue to better understand what the person is going through, to investigate alternate interpretations of the facts, and to help make the untestable testable.

Is It a Fact or Just a Conclusion?

My thought process starts with the tester’s reason for making the comment, and I’d like to explore his thinking along those lines. I’m not going to defend the product or its testability. Rather, I want to determine whether the tester is stating a fact or drawing a conclusion. A fact serves as a platform for further discussion: it specifies where we start on the testability spectrum, and “This can’t be tested” presumably sits at the far end of that scale. In this situation, I want to put the tester’s assertion to the test by asking him what he knows about the product and which parts he thinks cannot be tested.

If, instead, he is drawing a conclusion, what knowledge is he relying on? Perhaps he tried to assess some function, found it difficult to get a result, and concluded, “This can’t be tested.” My goal is to explore and understand his perceptions.

Let’s examine statements and conclusions using the following examples.

Root Cause: Test Conditions

A few years ago, I managed a global testing project with many great testers, but I remember one specific tester who stood out, though not in a positive way. In almost every testing task he was given, the first thing he claimed was, “I cannot test it because I don’t have the right test conditions.” That made me curious, so I started to explore his test conditions through the following questions:

- Do you have any dependencies that block you?

- Do you have issues with the test environment?

- Do you have the new code with a stable build?

- Do you need any help setting up specific test scenarios?

- Do you need any help setting up specific product flows?

- Is there an opportunity to perform negative testing?
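Several of these questions (blocking dependencies, environment issues, a stable build) can be answered mechanically before anyone declares a test impossible. The following is a minimal pre-flight sketch in Python, assuming the test depends on a few environment variables and network services; the names `APP_BASE_URL` and `TEST_DB_URL` and the `localhost:5432` service are hypothetical placeholders, not part of any real project.

```python
import os
import socket

# Hypothetical prerequisites: replace with what your test actually depends on.
REQUIRED_ENV_VARS = ["APP_BASE_URL", "TEST_DB_URL"]
REQUIRED_SERVICES = [("localhost", 5432)]  # e.g., a test database


def missing_env_vars(names, env=os.environ):
    """Return the names of required variables that are unset or empty."""
    return [name for name in names if not env.get(name)]


def unreachable_services(services, timeout=2.0):
    """Return 'host:port' for every service that refuses or times out a TCP connect."""
    down = []
    for host, port in services:
        try:
            with socket.create_connection((host, port), timeout=timeout):
                pass  # connection succeeded; close it immediately
        except OSError:
            down.append(f"{host}:{port}")
    return down


def preflight(env_vars=REQUIRED_ENV_VARS, services=REQUIRED_SERVICES):
    """Collect every blocking test condition into one human-readable list."""
    problems = [f"missing env var {name}" for name in missing_env_vars(env_vars)]
    problems += [f"cannot reach {svc}" for svc in unreachable_services(services)]
    return problems
```

Running `preflight()` at the start of a session turns “I don’t have the right test conditions” into a concrete list of what is missing, which is a much easier conversation to have.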

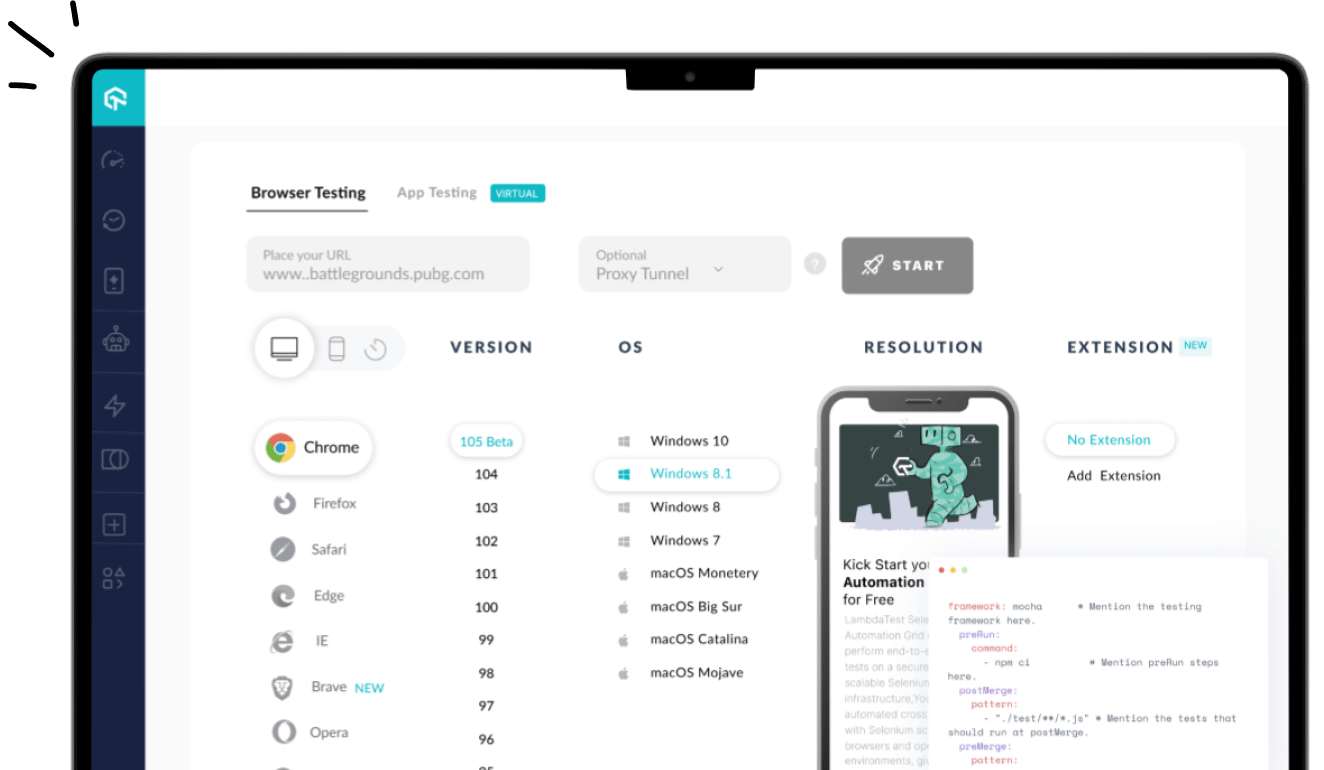

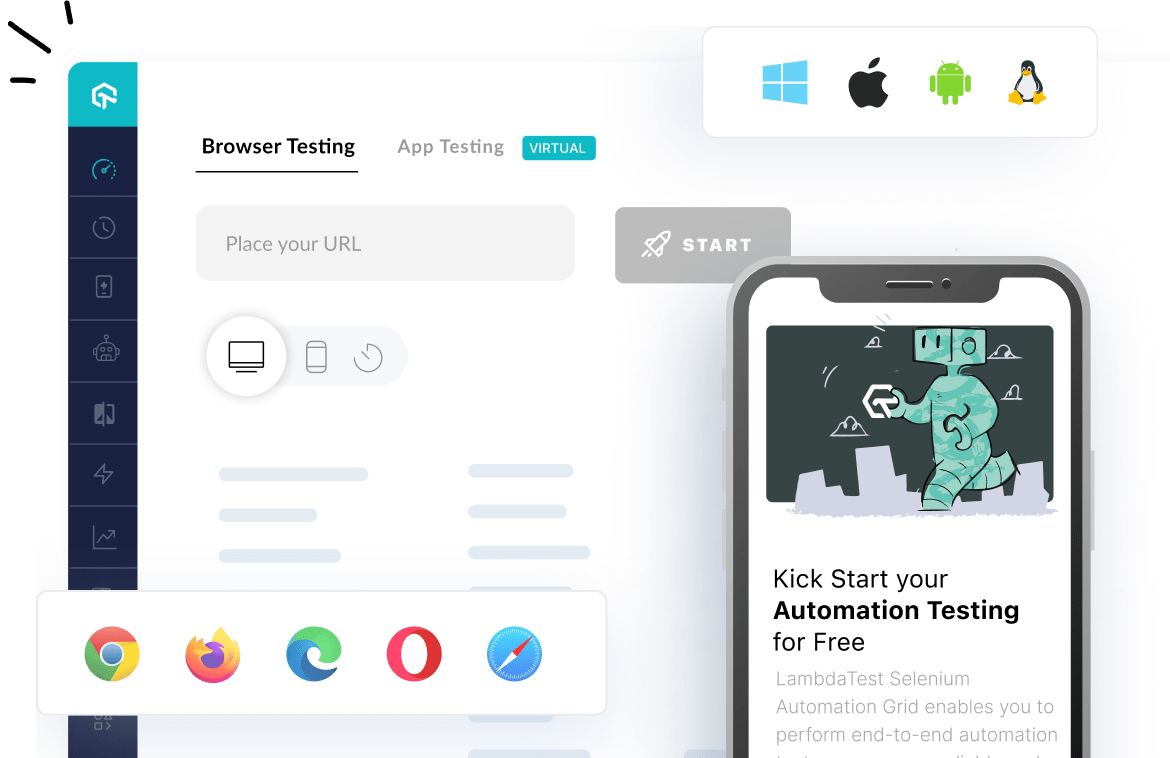

In such cases, using a cloud-based testing platform like LambdaTest can be a game-changer. It allows teams to instantly access thousands of real browser and OS combinations—eliminating delays due to physical setup or environment inconsistencies.

LambdaTest is an AI-powered test orchestration and execution platform that enables both manual and automated testing at scale across 5000+ real devices, browsers, and operating system combinations.

It empowers teams to simulate various test conditions effortlessly, without the overhead of environment management—streamlining testing and accelerating feedback loops.

Root Cause: It’s Not a Production Environment

The differences between non-production (test) and production environments are frequently given as reasons why particular features cannot or should not be tested. These differences affect both functional and non-functional tests (load, endurance, performance, and so on).

In production, there may be automated processes that the tester must perform manually in a test environment. Production data represents real customers and real circumstances that may or may not occur in the test environment, and it may contain sensitive information. Production hardware is designed for high performance and is typically clustered. Finally, applications may require separate credentials to connect to databases and retrieve information from the Internet.

Many of these differences are product risks that development teams must be aware of. To reduce these risks, minimize the differences by asking yourself the following questions:

- Is it possible to automate manual tasks for a single day?

- Is it possible to mine and cleanse production data for use in test environments, or must it be simulated?

- Is it worth building test environments on infrastructure that matches production, including clustering?

- To minimize the impact on the application during major deployments, can resource access in the production environment be assessed beforehand?
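For the question about cleansing production data, one common approach is pseudonymization: replace sensitive values with deterministic tokens so records stay consistent across tables but no longer identify real customers. This is a minimal Python sketch; the field names in `SENSITIVE_FIELDS` and the `example.test` e-mail format are assumptions for illustration, and a real masking effort would also need to cover free-text fields and any regulatory requirements that apply to your data.

```python
import hashlib

# Hypothetical list of sensitive columns; adjust to your own schema.
SENSITIVE_FIELDS = {"name", "email", "phone"}


def pseudonymize(value: str, salt: str) -> str:
    """Deterministic one-way token: the same input and salt always give the same token."""
    digest = hashlib.sha256((salt + value).encode("utf-8")).hexdigest()
    return digest[:12]


def cleanse_record(record: dict, salt: str) -> dict:
    """Return a copy of the record with sensitive fields replaced by tokens."""
    cleaned = {}
    for field, value in record.items():
        if field in SENSITIVE_FIELDS and value is not None:
            token = pseudonymize(str(value), salt)
            # Keep e-mail addresses e-mail shaped so format validation still passes.
            cleaned[field] = f"user_{token}@example.test" if field == "email" else f"anon_{token}"
        else:
            cleaned[field] = value  # non-sensitive fields are copied unchanged
    return cleaned
```

Because the tokens are deterministic, the same customer maps to the same token in every table, so joins and duplicate-detection logic still behave realistically. The salt must be kept secret; otherwise low-entropy values such as phone numbers could be recovered by brute force.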

Root Cause: Embedded Code

With scaffolding code or other deeply embedded functionality, I occasionally find that a module is hard to test because it is buried so deep. The same can be said of infrequent procedures (such as month-end processing) or long-running operations. Deeply embedded functionality can include programs that sit several levels deep in a hierarchy, or even a hard-to-reach component in an automobile engine.

In many cases, producing evidence that the code actually ran is challenging. Sometimes indirect evidence suggests that the procedure took place. If there is no such evidence, I suggest experimenting to see whether the procedure ever executes (for example, by adding log statements that record its execution).
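Adding logs to prove execution can be as lightweight as wrapping the embedded function. A minimal Python sketch, assuming the code under test is Python and can be decorated; `month_end_rollup` is a hypothetical stand-in for a rarely run procedure. INFO messages only appear once logging is configured, for example with `logging.basicConfig(level=logging.INFO)`.

```python
import functools
import logging

logger = logging.getLogger("execution_trace")


def trace_execution(func):
    """Log entry, successful exit, or failure of func so each run leaves evidence."""
    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        logger.info("entering %s", func.__qualname__)
        try:
            result = func(*args, **kwargs)
        except Exception:
            # Record the failure with its traceback, then re-raise unchanged.
            logger.exception("failed in %s", func.__qualname__)
            raise
        logger.info("finished %s", func.__qualname__)
        return result
    return wrapper


@trace_execution
def month_end_rollup(balances):
    """Hypothetical deeply embedded procedure that only runs at month end."""
    return sum(balances)
```

After the next scheduled run, searching the logs for `entering month_end_rollup` answers the question “does this ever execute?” without having to reach the code directly.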

Root Cause: We Cannot Accept the Risk Because the Test Is Too Dangerous

I’ve assessed a few products that carry some inherent risk in their normal use. Testing rocket engines is obviously not the same as testing a gaming application; the methods involve some danger to both the tester and the product. Even if such products are less testable, my project manager and I consider whether to test them and how much risk to accept. In other words, what are the likely consequences of using this product if a particular test is not carried out? Consider the following example:

“I won’t be able to test this DELETE method since it erases the whole of the database.”

I ask how we might reduce the risk while still conducting a test that produces reliable and relevant data.

- Can we use tools to mock transactions instead of triggering them?

- Is it possible to use a mock to imitate the database?

- Can we create a similar table structure and populate it with data from the real table in the same database?

- Can we intercept the query and examine its syntax before it runs?
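These risk-reduction ideas can be tried against a disposable database before going anywhere near the real one. This Python sketch uses an in-memory SQLite database as a stand-in; `purge_all` and the `customers` table are hypothetical. It shows three of the ideas: mocking the connection to check which SQL would be sent, running the DELETE against a copy of the table, and prefixing the statement with SQLite’s `EXPLAIN` so it is compiled but not executed. `EXPLAIN` behaves differently across database engines, so check your engine’s documentation before relying on that last technique.

```python
import sqlite3
from unittest.mock import MagicMock


def purge_all(conn, table="customers"):
    """Hypothetical function under test: deletes every row in the table.
    The table name is interpolated because SQL parameters cannot bind
    identifiers; only pass trusted names."""
    conn.execute(f"DELETE FROM {table}")
    conn.commit()


def build_demo_db():
    """Stand-in for the real database: in-memory SQLite with three sample rows."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO customers (name) VALUES (?)",
                     [("Ada",), ("Grace",), ("Linus",)])
    conn.commit()
    return conn


def check_with_mock():
    """1) Mock the database: verify the SQL that would be sent, without running it."""
    fake_conn = MagicMock()
    purge_all(fake_conn)
    fake_conn.execute.assert_called_once_with("DELETE FROM customers")
    fake_conn.commit.assert_called_once()
    return True


def check_on_copy(conn):
    """2) Copy the real table into a scratch table and run the DELETE there.
    Returns (rows left in the copy, rows left in the real table)."""
    conn.execute("DROP TABLE IF EXISTS customers_copy")
    conn.execute("CREATE TABLE customers_copy AS SELECT * FROM customers")
    purge_all(conn, table="customers_copy")
    copy_rows = conn.execute("SELECT COUNT(*) FROM customers_copy").fetchone()[0]
    real_rows = conn.execute("SELECT COUNT(*) FROM customers").fetchone()[0]
    return copy_rows, real_rows


def check_syntax(conn, sql):
    """3) Intercept the query: EXPLAIN makes SQLite compile the statement and
    return its bytecode instead of executing it, so no rows are touched."""
    try:
        conn.execute("EXPLAIN " + sql).fetchall()
        return True
    except sqlite3.Error:
        return False
```

A typical session builds the demo database, runs `check_with_mock()`, then `check_on_copy(conn)`, which should report an empty copy while the original table keeps all of its rows.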

Alert your development team when you do this kind of testing. They can be prepared for unusual occurrences in the environment, anticipate additional challenges, or even engage in a bug hunt.

Root Cause: Too Small or Basic to Test

I’ve heard it said, more often as a tester than as a developer, that a change is too simple to test. To the person making the claim, the modification seems straightforward: just one line of code or a configuration file update. Other simple adjustments include adding a phrase to an error message, fixing the spelling of customer-facing text, or adding contact information. Yet simple adjustments can still introduce mistakes. I’ve seen cases where the new error-message text contained a spelling mistake or the updated contact details were no longer in service. After several such experiences, spelling and “easy modifications” have become two of my highest-priority scenarios. Also, even if such changes pass code review, a better test is to inspect them after they have been deployed.
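Inspecting a “simple” text change after deployment can also be partly automated. This sketch compares deployed user-facing strings against an approved copy and flags repeated words, a typo that reviewers often read straight past; the message keys, the `APPROVED` dictionary, and however you would load the `deployed` strings from the running build are all hypothetical. It cannot tell whether a phone number is still in service; that still needs a human check.

```python
import re

# Hypothetical approved copy, e.g., text signed off by the product owner.
APPROVED = {
    "err.payment_declined": "Your payment was declined. Please contact support@example.com.",
    "footer.contact": "Call us at +1-555-0100",
}

# A word followed by whitespace and the same word again ("was was").
DOUBLED_WORD = re.compile(r"\b(\w+)\s+\1\b", re.IGNORECASE)


def find_text_regressions(deployed: dict, approved: dict) -> list:
    """Report missing strings, strings that differ from the approved copy,
    and repeated words anywhere in the deployed text."""
    problems = []
    for key, expected in approved.items():
        actual = deployed.get(key)
        if actual is None:
            problems.append(f"{key}: missing from deployed build")
        elif actual != expected:
            problems.append(f"{key}: expected {expected!r}, got {actual!r}")
    for key, text in deployed.items():
        match = DOUBLED_WORD.search(text)
        if match:
            problems.append(f"{key}: repeated word {match.group(1)!r}")
    return problems
```

Run against the strings extracted from the deployed build, an empty result means the approved text made it out intact.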

Root Cause: Frustration

Once, a frustrated tester came to me and said, “This can’t be tested!” Although my first impulse was to help with the testing, I tried to empathize first. “Perhaps it’s untestable,” I allowed. I discussed the technical difficulties of that specific function as well as other factors that influence testability. This kind of exploration can reduce a person’s frustration, take his attention off the immediate task for a moment, and readily draw him into a discussion.

I began a collaborative investigation of the product being tested. Remember to start simple: verify that the product has been deployed. How many times have you begun evaluating something only to learn that it was not included in the latest build? Discuss the test’s objective and how the test plan is meant to reveal behavior and identify problems. Is the plan in line with the product’s business expectations?
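Checking that the product has actually been deployed is easy to script when the application exposes its build metadata. This sketch assumes a hypothetical `/version` endpoint that returns JSON with `build` and `features` fields; many applications expose something similar, but the path and field names here are illustrative only.

```python
import json
from urllib.request import urlopen


def fetch_build_info(base_url: str, timeout: float = 5.0) -> dict:
    """Read build metadata from a hypothetical /version endpoint returning JSON."""
    with urlopen(f"{base_url.rstrip('/')}/version", timeout=timeout) as resp:
        return json.load(resp)


def missing_from_build(build_info: dict, expected_build: str, required_features: set) -> list:
    """List what is missing before testing starts: a wrong build and/or absent features."""
    missing = []
    actual_build = build_info.get("build")
    if actual_build != expected_build:
        missing.append(f"expected build {expected_build}, found {actual_build}")
    deployed = set(build_info.get("features", []))
    missing.extend(sorted(required_features - deployed))  # sorted for stable output
    return missing
```

Keeping the network call separate from the comparison means the comparison can be checked with canned data, and an empty list from `missing_from_build(fetch_build_info(url), ...)` confirms the build under test is the one you think it is.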

With this as a baseline, I discussed his experiences and how he had reached his conclusions. We used data from the application (database records, log files, screenshots, etc.) to validate our observations. Along the way, I kept checking whether any testing avenue was emerging and then followed it. When our combined efforts produced little, we brought a developer into the discussion and explored ways to improve the product’s transparency.

Root Cause: Facing Challenging Personalities

On another occasion, I worked on a project with a developer who, while talented, was not always tester-friendly. He once contacted me and declared, quite arrogantly, that his code was not testable and advised me not to waste my time on it.

If you find yourself in this situation, start the dialogue by asking what the individual has done that makes the product untestable. Inevitably, you will need to ask questions that politely point out that if the product is released untested or under-tested, there is a risk of failure (how severe depends on the product; I’m sure we can all imagine sufficiently large defects), along with the consequences that follow. The aim of the conversation is to gauge how comfortable this person would be if such a situation played out.

Alternatively, the discussion might focus on the motivation for reducing the product’s testability. Although deliberately decreasing testability may seem counterintuitive, an investigation may uncover security concerns, time constraints, ego (tread carefully here), or other factors beyond the person’s control. Regardless, as a tester, you can make visible the risk of not testing all or part of the product.

Root Cause: Different Points of View on Testing and Testability

During one project, I talked with a developer about some development work and suggested she release the code so I could check it. She responded that there was nothing to test. In the back of my mind, I was thinking about the work we had just discussed and the long hours she had put in over the previous month. “Is there genuinely nothing to test after that amount of time and effort?” I asked.

I expressed my concerns to my project director, but we proceeded without the review I had recommended. When we later tested the code, we discovered errors and missing requirements. It’s an unfortunate and frequently told story, but when we looked back at what had gone well and what we could improve, I gained a fresh perspective.

To find her development path, the developer experimented and built prototypes. Her successes along the way encouraged her to keep exploring. She saw her work as scaffolding: code that would help create other code. She also believed that scaffolding wasn’t worth testing.

As a result of this discussion, we worked with her on the following releases to review the scaffolding code early. I asked to study the code to better understand how it was integrated into the final product. The testing team also gave input on discrepancies in expected functionality and user interface elements. We did not open bugs (since we were looking at work in progress) but instead offered observations on changes to the product, an iterative approach that many agile teams are familiar with.
